Augmented learning is closely related to augmented intelligence (intelligence amplification) and augmented reality. Augmented intelligence applies information processing to extend the capabilities of the human mind through distributed cognition. Augmented intelligence provides extra support for autonomous intelligence and has a long history of success. Mechanical and electronic devices that function as augmented intelligence range from the abacus and the calculator to personal computers and smartphones. Software with augmented intelligence provides supplemental information that is related to the context of the user.

Our software learns from you, and as you teach it, it gradually takes routine daily tasks off your hands. Eventually, we hope it learns enough to come up with new ways to solve your problems.

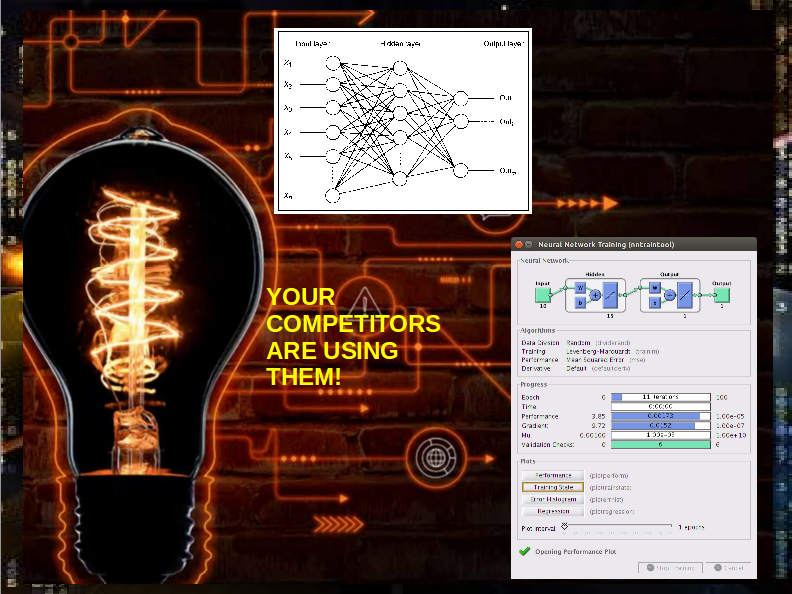

Yes, large companies use AI systems by the thousands. Everything you buy online from Amazon, Netflix, or Google is AI-driven. But there is more: airlines, banks, insurers, and oil companies all live and die by their AI.

Our augmented software provides services in the core areas of marketing, finance, production, and media. We treat all our clients as individuals and don’t believe in a one-size-fits-all solution. We’ll develop a customized program designed to help specific people in your company better fulfill their roles. Our programs learn from you, and we also teach them your processes.

Our bots are hard at work all over the internet, providing content for newspapers and media companies worldwide. Many work in gaming, and others provide feedback on websites.

Read more about the differences between artificial and augmented intelligence.